ChatGPT in Enterprise Use: Legal Issues?

14 Jun, 2023 | 4 minutes

ChatGPT has completely taken over the professional world in the last few months. Because it can generate content and answer almost any request, people use the AI-powered chatbot to do administrative tasks, write long texts like letters and essays, create resumes, and much more.

Research shows that 46% of professionals use ChatGPT to complete tasks at work. According to the results of another survey, 45% of workers believe that using ChatGPT helps them do their jobs more effectively.

Still, workers seem to ignore the downsides of artificial intelligence (AI) software. Many companies are concerned that their employees could share private company data with AI chatbots like ChatGPT, where it could end up in the hands of cybercriminals. If employees use ChatGPT to automatically generate content, the question of copyright also arises.

AI technologies can even be biased and discriminatory, which can be extremely problematic for companies that use them to screen job applicants or respond to customer inquiries. Many experts have questioned the security and legal implications of using ChatGPT in the workplace due to these issues.

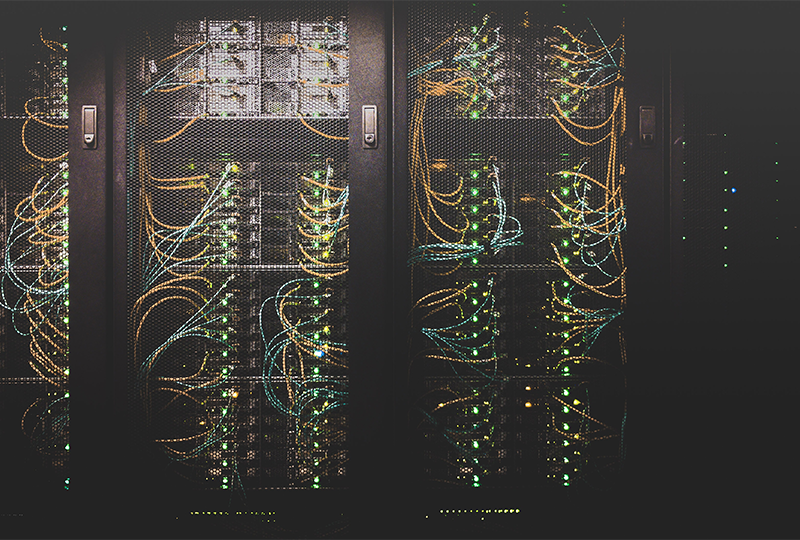

Data Security Risks

According to Neil Thacker, Chief Information Security Officer (CISO) at Netskope, the increasing use of generative AI technologies in the workplace is making organizations more vulnerable to significant data leaks.

He points out that OpenAI, the company behind ChatGPT, trains its models using data and queries stored on its servers. If cybercriminals broke into OpenAI's systems, they could gain access to "sensitive and proprietary data", which would be "harmful" to businesses.

In an effort to improve privacy, OpenAI has since added "opt-out" and "disabling history" options, but Thacker says users still have to actively select these.

According to Thacker, "there are currently few assurances about how companies whose products use generative AI will process and store data", although legislation such as the UK Data Protection Act and the European Union's proposed AI Act is a step in the right direction for regulating software like ChatGPT.

Ban AI?

Businesses can choose to ban the use of AI services in the workplace if they are concerned about the security and compliance issues involved. However, Thacker warns that this can backfire.

A ban on AI services in the workplace would likely lead to "shadow AI", the use of unauthorized third-party AI services outside of the company's control, Thacker said, which wouldn't solve the problem.

AI is more valuable when paired with human intelligence.

The ultimate responsibility for ensuring employees use AI tools safely and responsibly rests with those responsible for security. To do that, they need to know where sensitive information is stored once it's entered into third-party systems, who has access to that data, how it's being used, and how long it's being retained.

Businesses should be aware that employees will use the generative AI integrations offered by reputable enterprise platforms like Teams, Slack, Zoom, and others. Employees should also be advised that using these services with the default settings may result in the disclosure of sensitive information to third parties.

Safe use of AI tools in the workplace

Anyone using ChatGPT and other AI tools at work could inadvertently violate copyright law, which could expose their business to costly legal action and penalties.

Because ChatGPT generates text from information already published on the internet, some of the material it draws on will inevitably be subject to copyright.

Organizations face the problem - and risk - of not knowing when employees have infringed someone else's copyright because they are unable to verify the source of the information.

If organizations are to experiment with AI in a safe and ethical manner, it is important that security and HR teams develop and implement very clear policies on when, how and in what situations it can be used.

Organizations can choose to allow AI "for internal purposes only" or "in certain external contexts". Once the company has established these permissions, the IT security team must, as far as technically possible, prevent any use of ChatGPT beyond them.
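As a rough illustration, permissions like these could be written down in a form that tooling can check. The sketch below is only an assumption about how that might look: the `AIUsagePolicy` class, the `is_use_permitted` method, and the context names (`internal`, `customer_support`) are hypothetical and not taken from any real product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AIUsagePolicy:
    """Hypothetical company policy: the contexts in which an AI tool may be used."""
    tool: str
    allowed_contexts: frozenset = field(default_factory=frozenset)

    def is_use_permitted(self, context: str) -> bool:
        # Default-deny: any context that is not explicitly allowed is refused.
        return context in self.allowed_contexts


# Example: ChatGPT approved "for internal purposes only".
policy = AIUsagePolicy(tool="ChatGPT", allowed_contexts=frozenset({"internal"}))

print(policy.is_use_permitted("internal"))          # True
print(policy.is_use_permitted("customer_support"))  # False
```

The default-deny choice mirrors the idea that anything outside the agreed permissions should be blocked rather than tolerated.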

Fake Chatbots

Cybercriminals are capitalizing on the growing enthusiasm for ChatGPT and generative AI by developing fake chatbots designed to steal data from unsuspecting users.

Many of these knock-off chatbot applications claim to use the public ChatGPT API, while some use their own large language models. In practice, these fake chatbots are often either weak copies of ChatGPT or malicious fronts for collecting private or sensitive information.

CISOs who are particularly concerned about ChatGPT's privacy impact should consider using software such as a cloud access security broker (CASB).

Granular SaaS application controls mean employees are granted access to business-critical applications while restricting or disabling access to high-risk applications like generative AI. Finally, consider sophisticated data security that detects and prevents accidental leaks of company secrets into generative AI programs by classifying data using machine learning.
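To make the idea of screening prompts for company secrets concrete, here is a minimal sketch. It swaps the machine-learning classification mentioned above for a few simple regular expressions, purely for illustration. The pattern set, the `sk-` key format, and the function names `find_sensitive` and `screen_prompt` are all hypothetical assumptions; a real deployment would rely on the organization's own classifiers or a dedicated data loss prevention product.

```python
from __future__ import annotations

import re

# Hypothetical, illustrative patterns only; not a complete or reliable detector.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
    "confidential_marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_sensitive(prompt: str) -> list[str]:
    """Return the names of all patterns that match somewhere in the prompt."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(prompt)]


def screen_prompt(prompt: str) -> tuple[bool, list[str]]:
    """Allow the prompt only if no sensitive pattern matched."""
    hits = find_sensitive(prompt)
    return (not hits, hits)


allowed, hits = screen_prompt("Summarize this CONFIDENTIAL memo for jane@example.com")
print(allowed, hits)  # False ['email', 'confidential_marker']
```

A check like this would sit between the employee and the AI service, so that a flagged prompt can be blocked or reviewed before it leaves the company.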

Impact on the reliability of the data

Alongside copyright and cybersecurity, the trustworthiness of the data used for ChatGPT's algorithms is another major issue. One expert warns that even small mistakes make the results implausible.

Concerns about auditability and compliance are growing as professionals attempt to use AI and chatbots in the workplace. Therefore, the application and execution of these evolving technologies must be well thought out, especially with regard to the source and the quality of the data used to build and feed the models.

Applications that use generative AI collect data from websites across the internet and use it to respond to user requests. However, since not all online content is accurate, there is a chance that tools like ChatGPT may spread false information.

According to Verschuren, to combat misinformation, developers of generative AI software should ensure that data only comes from reputable, licensed, and constantly updated sources. This is why human expertise is so important, as AI cannot decide which sources to use or how to access them.

Use ChatGPT responsibly

Organizations need to take a number of steps to ensure their employees are using this technology wisely and safely. The first is to take data protection laws into account.

Organizations must comply with laws like the CCPA and GDPR. They should establish robust procedures for handling data, such as obtaining user consent, restricting data collection, and encrypting sensitive data. For example, to protect patient privacy, a healthcare organization using ChatGPT must treat patient data in accordance with the Data Protection Act.

Companies using ChatGPT should consider intellectual property rights. This is because ChatGPT is basically a content-creation tool. For proprietary and copyrighted material, companies should establish clear policies about ownership and usage rights.

Organizations can avoid disputes and unauthorized use of intellectual property by clearly identifying ownership. For example, a media company using ChatGPT must prove that it is the legal owner of articles or other artwork created by the AI; however, this is open to interpretation.
